Agentic AI refers to systems that can autonomously plan and execute tasks to achieve a goal with minimal human supervision. These systems mark a significant operational shift, moving beyond the content generation of generative AI to goal-driven action. Agentic AI has become a top strategic technology trend: Gartner reports a 1,445% surge in enterprise inquiries about multiagent systems from Q1 2024 to Q2 2025.

Key Takeaways

Definition: Agentic AI describes intelligent systems that exhibit autonomy, adaptability, and goal-oriented behavior to perform complex, multi-step tasks.

How It Works: Agentic AI operates on a continuous cycle of perceiving its environment, reasoning to create a plan, acting on that plan using available tools, and learning from the outcomes.

Agents vs. Agentic Systems: An AI agent is a specialized tool for a single task, while an agentic system is an architecture that orchestrates multiple agents and tools to achieve a broad, outcome-driven objective.

Cybersecurity Application: In a security operations center (SOC), agentic AI augments analysts by automating alert triage, accelerating investigations, and orchestrating response actions across different security tools.

Core Limitations: Despite its power, agentic AI introduces significant risks related to security, governance, and trust. Adversaries can exploit its capabilities, and without proper guardrails, it can lead to unintended consequences.

Market Growth: The agentic AI cybersecurity market was valued at $22.56 billion in 2024 and is projected to exceed $322 billion by 2033, reflecting a compound annual growth rate of 34.4% (Grand View Research, 2024).

Future Impact: By 2027, 70% of multiagent systems will use narrowly specialized agents, improving accuracy but increasing coordination complexity (Gartner, 2026).
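
The market growth figures above can be sanity-checked with the standard compound-growth relation, end = start × (1 + r)^years. The minimal Python check below uses only the numbers quoted in the takeaway and assumes that 2024 to 2033 counts as nine compounding years; that framing is an assumption about how the projection was built, not something the cited report states here.

```python
def project(start: float, rate: float, years: int) -> float:
    """Value after compounding `start` at annual `rate` for `years` years."""
    return start * (1 + rate) ** years


def implied_cagr(start: float, end: float, years: int) -> float:
    """Annual growth rate that turns `start` into `end` over `years` years."""
    return (end / start) ** (1 / years) - 1


# Figures from the Market Growth takeaway, in USD billions; 2024 -> 2033 = 9 years
projected_2033 = project(22.56, 0.344, 9)
print(f"{projected_2033:.1f}")                          # 322.8
print(f"{implied_cagr(22.56, projected_2033, 9):.3f}")  # 0.344
```

The result, roughly $322.8 billion, is consistent with the "exceed $322 billion" projection.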

What Is Agentic AI?

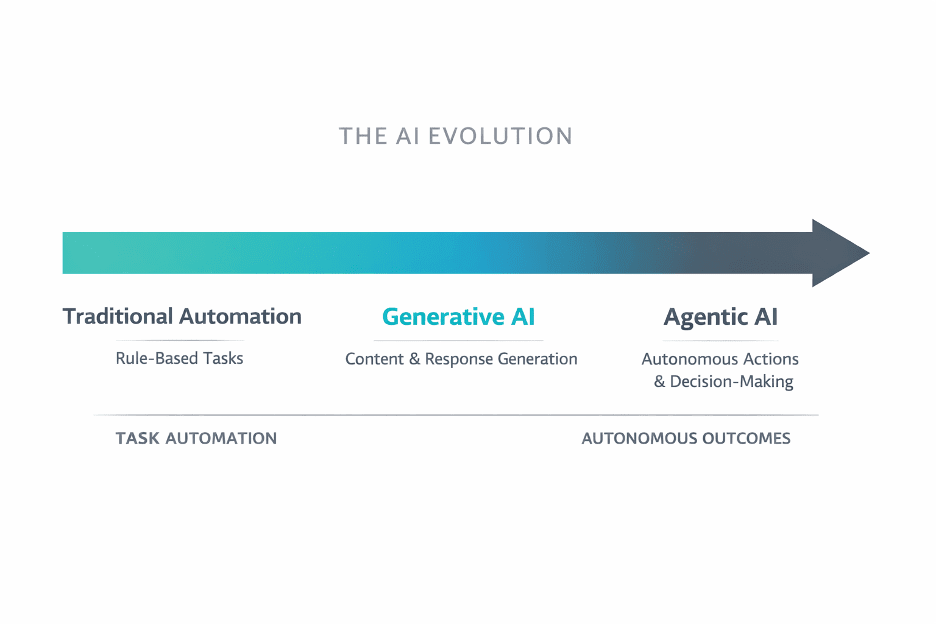

Agentic AI refers to artificial intelligence systems capable of autonomous planning, decision-making, and action to achieve specific goals with limited human intervention. Unlike passive models that only respond to prompts, agentic AI demonstrates proactiveness by breaking down complex objectives into manageable steps and executing them in dynamic environments. This evolution marks a critical shift from task automation to outcome-driven autonomy.

Three Defining Characteristics

True agentic AI is defined by a combination of three core traits that allow it to operate independently and effectively:

Autonomy: Agentic systems can initiate and complete tasks end-to-end without requiring step-by-step human guidance. This enables them to manage complex workflows continuously, such as monitoring a network for threats or managing incident response tickets.

Adaptability: These systems perceive their environment and adjust their behavior based on new information or changing conditions. If an initial plan to isolate a compromised host fails, an agent can adapt its strategy by attempting to block the user account instead.

Goal-Orientation: Agentic AI is driven by objectives, not just instructions. A security analyst can assign a high-level goal, like "Investigate and contain the threat associated with this alert," and the system will determine the necessary actions to achieve that outcome.
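
Adaptability and goal-orientation can be illustrated with a minimal Python sketch: the agent receives a goal (contain the threat) plus an ordered list of strategies, and it falls back to the next strategy when one fails, mirroring the isolate-host-then-block-account example above. The functions `isolate_host` and `disable_account` are hypothetical stand-ins for EDR and identity-provider calls, and the simulated isolation failure is an assumption made for illustration.

```python
from typing import Callable, Optional

Strategy = tuple[str, Callable[[dict], bool]]


def isolate_host(alert: dict) -> bool:
    # Hypothetical EDR call; simulated failure (e.g., the endpoint sensor is offline)
    return False


def disable_account(alert: dict) -> bool:
    # Hypothetical identity-provider call; simulated success
    return True


def contain(alert: dict, strategies: list[Strategy]) -> tuple[Optional[str], list[tuple[str, bool]]]:
    """Pursue the goal "contain the threat", adapting when a strategy fails."""
    attempts: list[tuple[str, bool]] = []
    for name, action in strategies:
        succeeded = action(alert)
        attempts.append((name, succeeded))
        if succeeded:
            return name, attempts
    return None, attempts  # every strategy failed: hand off to an analyst


alert = {"host": "ws-042", "user": "jdoe"}
used, log = contain(alert, [("isolate_host", isolate_host), ("disable_account", disable_account)])
print(used)  # disable_account
print(log)   # [('isolate_host', False), ('disable_account', True)]
```

Keeping the strategies as data rather than hard-coded branches is what lets the same goal be pursued with different fallback orders.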

The agentic AI market is expanding rapidly, but governance is not keeping pace with adoption. Nearly two out of three breached organizations lack AI governance policies (IBM, 2025). Additionally, Gartner predicts that at least 80% of unauthorized AI transactions in 2026 will stem from internal policy violations rather than external attacks (Gartner, 2026), underscoring that adoption without governance creates risk from within.

How Agentic AI Works

Agentic AI works by following a continuous, cyclical process of perceiving its environment, reasoning to form a plan, acting on that plan, and learning from the results to improve future performance. This operational loop enables an agentic system to move beyond simple, pre-programmed instructions and exhibit autonomous, goal-oriented behavior.

The Agentic AI Operational Cycle

The core of agentic AI can be broken down into a five-step cycle that mimics cognitive processes:

Perception: The system gathers data from its environment through APIs, databases, user inputs, and other integrated tools. For a SOC analyst, this means the agent ingests security alerts, threat intelligence feeds, and logs from endpoints and network devices.

Reasoning: Using a cognitive module, often powered by a large language model (LLM), the AI processes the collected data to understand the context and create a plan to achieve its goal. It breaks down a high-level objective, like "investigate a suspicious login," into a sequence of concrete sub-tasks.

Action: The system executes the plan by interacting with other tools and systems. This could involve querying a SIEM for related events, submitting an IP address to a threat intelligence platform for enrichment, or triggering a SOAR playbook to isolate an endpoint.

Learning: After executing an action, the agent evaluates the outcome and uses feedback to refine its strategies. This feedback loop, which some designs implement with reinforcement learning, allows it to become more effective over time, improving its ability to distinguish true threats from false positives.

Reflection/Replanning: In more advanced architectures, the agent can self-reflect on its overall performance, identify flaws in its reasoning, and modify its core plan. If a chosen course of action proves ineffective, the system can pivot to an alternative strategy without human intervention.
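
The five-step cycle can be sketched as a small Python loop. This is a toy under stated assumptions: the `plan` function is a rule-based stub standing in for an LLM planner, the tool names (`ip_reputation`, `geo_lookup`, `mfa_history`) and their canned verdicts are hypothetical, and "learning" is reduced to remembering past outcomes so the agent does not repeat a step.

```python
from typing import Callable

Tool = Callable[[dict], str]  # each tool returns "malicious", "benign", or "unknown"

CANDIDATE_STEPS = ["ip_reputation", "geo_lookup", "mfa_history"]


def plan(observation: dict, memory: list[dict]) -> list[str]:
    """Reasoning stub standing in for an LLM planner: choose the next untried step.

    This stub ignores `observation`; an LLM planner would read it.
    """
    tried = {step for past in memory for step in past}
    return [s for s in CANDIDATE_STEPS if s not in tried][:1]


def run_cycle(alert: dict, tools: dict[str, Tool], max_cycles: int = 3):
    memory: list[dict] = []                  # outcomes from earlier cycles
    for _ in range(max_cycles):
        observation = dict(alert)            # Perception: read the current alert
        steps = plan(observation, memory)    # Reasoning: decide what to do next
        if not steps:
            break
        results = {s: tools[s](observation) for s in steps}  # Action: call the tools
        memory.append(results)               # Learning: remember what happened
        verdicts = set(results.values())
        if "malicious" in verdicts:
            return "malicious", memory
        if verdicts == {"benign"}:
            return "benign", memory
        # Reflection/replanning: inconclusive, so the next cycle plans a new step
    return "escalate_to_analyst", memory


tools = {
    "ip_reputation": lambda a: "unknown",
    "geo_lookup": lambda a: "malicious" if a["country"] != a["usual_country"] else "benign",
    "mfa_history": lambda a: "unknown",
}
verdict, memory = run_cycle({"ip": "203.0.113.7", "country": "BR", "usual_country": "US"}, tools)
print(verdict)  # malicious
print(memory)   # [{'ip_reputation': 'unknown'}, {'geo_lookup': 'malicious'}]
```

When every step stays inconclusive, the loop ends by escalating to an analyst rather than guessing, which is one simple way to bound an agent's autonomy.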

Key Architectural Components

An agentic system relies on several integrated components to function:

Large Language Models (LLMs): LLMs serve as the reasoning engine or "brain" of the agent, enabling it to understand natural language, process complex information, and make decisions.

Tool Use: Agents are equipped with a "toolbelt" of APIs that let them interact with external systems, such as security information and event management (SIEM), security orchestration, automation, and response (SOAR), and endpoint detection and response (EDR) platforms.

Memory and State: Agents maintain short-term memory for immediate context and long-term memory that stores knowledge from past interactions, so context persists across tasks and past outcomes can inform future decisions.

Orchestration: An orchestration layer manages the overall workflow, sequences tasks, and coordinates the actions of multiple specialized agents to achieve a common goal.
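
Two of these components, the tool belt and memory, can be sketched in plain Python. This is a minimal in-process design under stated assumptions: agent frameworks commonly describe tools to the LLM as JSON schemas, which this sketch omits, and `siem_search` with its canned result is hypothetical.

```python
from collections import deque
from typing import Any, Callable


class ToolBelt:
    """Registry of tools, each paired with a description a planner could read."""

    def __init__(self) -> None:
        self._tools: dict[str, tuple[str, Callable[..., Any]]] = {}

    def register(self, name: str, description: str):
        def decorator(fn: Callable[..., Any]) -> Callable[..., Any]:
            self._tools[name] = (description, fn)
            return fn
        return decorator

    def describe(self) -> dict[str, str]:
        """What the reasoning engine would see when choosing a tool."""
        return {name: desc for name, (desc, _) in self._tools.items()}

    def call(self, name: str, **kwargs: Any) -> Any:
        if name not in self._tools:
            raise KeyError(f"unknown tool: {name}")
        return self._tools[name][1](**kwargs)


class Memory:
    """Short-term: a bounded window of recent events. Long-term: durable facts."""

    def __init__(self, window: int = 3) -> None:
        self.short_term: deque = deque(maxlen=window)
        self.long_term: dict[str, str] = {}

    def observe(self, event: str) -> None:
        self.short_term.append(event)  # the oldest event drops off when full

    def learn(self, key: str, value: str) -> None:
        self.long_term[key] = value


belt = ToolBelt()


@belt.register("siem_search", "Search SIEM events for an indicator")
def siem_search(indicator: str) -> list[str]:
    return [f"3 firewall denies for {indicator}"]  # hypothetical canned result


memory = Memory(window=2)
for event in ["alert 1 opened", "alert 2 opened", "alert 3 opened"]:
    memory.observe(event)
memory.learn("203.0.113.7", "known scanner, benign")

print(belt.describe())          # {'siem_search': 'Search SIEM events for an indicator'}
print(belt.call("siem_search", indicator="203.0.113.7"))
print(list(memory.short_term))  # ['alert 2 opened', 'alert 3 opened']
```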

AI Agents vs. Agentic Systems

AI agents execute individual SOC tasks; agentic systems orchestrate multiple agents, tools, and workflows to achieve end-to-end security outcomes. Understanding this distinction is critical for developing a security AI strategy that scales effectively.

What Is an AI Agent?

An AI agent is a specialized software capability designed to perform one well-defined task automatically. These agents are the building blocks of broader automation. In security, an AI SOC agent might be designed to take a single indicator of compromise (IOC) like an IP address and enrich it with geolocation and reputation data from a threat intelligence feed. They are efficient, fast, and excellent for repetitive, narrow tasks.
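
A single-task enrichment agent of this kind can be sketched in a few lines of Python. The `THREAT_INTEL` table is a hypothetical stand-in for a real feed's API, and the internal ranges are assumed to be the RFC 1918 blocks; the example addresses come from documentation ranges. Note that the agent only returns enriched data: deciding what to do next is left to the analyst.

```python
import ipaddress

# Assumed internal ranges (RFC 1918); an organization would use its own address plan
INTERNAL_NETS = [ipaddress.ip_network(n) for n in ("10.0.0.0/8", "172.16.0.0/12", "192.168.0.0/16")]

# Hypothetical threat-intel table standing in for a real feed's API
THREAT_INTEL = {"203.0.113.7": {"country": "NL", "reputation": "malicious"}}


def enrich_ip(ioc: str) -> dict:
    """Enrich one IP address IOC with geolocation and reputation."""
    ip = ipaddress.ip_address(ioc.strip())  # raises ValueError if not a valid IP
    if any(ip in net for net in INTERNAL_NETS):
        return {"ioc": str(ip), "country": None, "reputation": "internal"}
    intel = THREAT_INTEL.get(str(ip), {"country": "unknown", "reputation": "unknown"})
    return {"ioc": str(ip), **intel}


print(enrich_ip("203.0.113.7"))  # {'ioc': '203.0.113.7', 'country': 'NL', 'reputation': 'malicious'}
print(enrich_ip("10.1.2.3"))     # {'ioc': '10.1.2.3', 'country': None, 'reputation': 'internal'}
```

The explicit network list is used instead of `ip_address(...).is_private` because Python's `is_private` also treats documentation ranges such as 203.0.113.0/24 as non-global, which would misclassify the example address.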

What Is an Agentic System?

An agentic system is an objective-driven architecture that orchestrates multiple AI agents, tools, and workflows to achieve a defined security outcome. Rather than just executing a single task, an agentic system manages the entire lifecycle of a problem. It plans the work, sequences actions across different agents, enforces policies, and decides when to hand off control to a human analyst.

| Attribute | AI Agent | Agentic System |
|---|---|---|
| Scope | Single, well-defined task | End-to-end workflow or objective |
| Goal | Complete one step efficiently | Achieve a defined security outcome |
| Autonomy | Executes when triggered | Plans, sequences, and adapts independently |
| Tool Use | One tool or API | Orchestrates multiple agents and tools |
| SOC Example | Enrich an IP address with threat intel | Investigate, correlate, and recommend containment for a full alert |
| Human Role | Analyst manages next steps | Analyst validates outcomes and handles exceptions |

Why the Distinction Matters in a SOC

The difference between agents and agentic systems is the difference between task efficiency and operational scale. One makes a single step faster; the other automates the entire journey.

Consider a typical alert investigation:

An AI agent can improve task efficiency by automatically enriching a suspicious IP address. The analyst still needs to manually review the enriched data, correlate it with other signals, and decide what to do next.

An agentic system enables operational scale by orchestrating the entire investigation. It coordinates multiple agents to enrich indicators, correlate findings with internal logs and endpoint data, analyze the pattern of activity against the MITRE ATT&CK framework, and deliver an outcome-ready assessment that recommends a specific containment action.

While agents help analysts work faster, agentic systems equip the entire SOC to handle a higher volume of threats with greater consistency and depth.
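
The distinction can be sketched in Python: each function below is a single-purpose agent, and `orchestrate` plays the role of the agentic system by sequencing them, applying a policy, and deciding whether a human must validate the outcome. The agent functions, the known-bad IP set, and the decision policy are hypothetical simplifications; the two ATT&CK technique IDs (T1078 Valid Accounts, T1566 Phishing) are real.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Case:
    alert: dict
    findings: dict = field(default_factory=dict)
    recommendation: Optional[str] = None
    needs_human: bool = True


# Hypothetical single-task agents; each reads the case and returns new findings.
KNOWN_BAD_IPS = {"203.0.113.7"}  # stand-in for a threat-intel feed
ATTACK_MAP = {"suspicious_login": "T1078 Valid Accounts", "phishing": "T1566 Phishing"}


def enrich_agent(case: Case) -> dict:
    bad = case.alert["src_ip"] in KNOWN_BAD_IPS
    return {"reputation": "malicious" if bad else "unknown"}


def correlate_agent(case: Case) -> dict:
    logs = case.alert.get("related_logs", [])
    return {"related_events": sum(1 for e in logs if e["user"] == case.alert["user"])}


def attack_agent(case: Case) -> dict:
    return {"technique": ATTACK_MAP.get(case.alert["type"], "unmapped")}


def orchestrate(alert: dict, agents: list[Callable[[Case], dict]]) -> Case:
    """Sequence the agents, then apply a toy policy to recommend an outcome."""
    case = Case(alert)
    for agent in agents:
        case.findings.update(agent(case))
    reputation = case.findings.get("reputation")
    related = case.findings.get("related_events", 0)
    if reputation == "malicious" and related > 0:
        case.recommendation = "disable_account"  # analyst validates before execution
    elif reputation == "unknown" and related == 0:
        case.recommendation = "close_as_false_positive"
        case.needs_human = False
    else:
        case.recommendation = "escalate_for_review"
    return case


alert = {
    "type": "suspicious_login", "src_ip": "203.0.113.7", "user": "jdoe",
    "related_logs": [{"user": "jdoe", "event": "mfa_denied"}, {"user": "asmith", "event": "login"}],
}
case = orchestrate(alert, [enrich_agent, correlate_agent, attack_agent])
print(case.findings)
# {'reputation': 'malicious', 'related_events': 1, 'technique': 'T1078 Valid Accounts'}
print(case.recommendation, case.needs_human)  # disable_account True
```

Each agent stays simple and testable on its own; the outcome-level decision, including when to hand off to a human, lives only in the orchestrator.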


Agentic AI in Cybersecurity

Agentic AI is transforming SOC operations by automating and accelerating threat triage, investigation, and response at machine speed. These systems handle repetitive, data-intensive tasks so analysts can focus on high-stakes decisions—an operational model that augments analysts rather than attempting to replace them.

SOC Automation and Analyst Augmentation

For years, security teams have been burdened by alert fatigue, tool sprawl, and a persistent talent shortage. Agentic AI in cybersecurity directly addresses these challenges by taking on key SOC functions:

Alert Triage: An agentic system can autonomously investigate and triage incoming alerts 24/7. It enriches alerts with context from various tools, closes out false positives, and escalates high-fidelity threats with a complete case file, reducing noise and analyst workload.

Investigation Acceleration: During an investigation, agents can execute complex queries across SIEM, EDR, and cloud logs in parallel, condensing hours of manual data gathering into minutes. This allows analysts to work with a complete picture of an attack from the outset.

Response Orchestration: Once a threat is confirmed, an agentic system can orchestrate response actions across multiple security controls—such as isolating an endpoint via EDR, blocking a domain at the firewall, and disabling a user account in Active Directory—based on pre-approved playbooks.
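
The parallel querying described under Investigation Acceleration can be sketched with Python's standard `concurrent.futures` thread pool, which suits I/O-bound API calls. The three query functions are hypothetical stand-ins for SIEM, EDR, and cloud-log APIs, and their fixed 0.2-second sleeps only simulate latency; the sketch shows the fan-out pattern, not the real-world speedup.

```python
import time
from concurrent.futures import ThreadPoolExecutor


# Hypothetical stand-ins for SIEM, EDR, and cloud-log query APIs; each sleeps to
# simulate network latency.
def query_siem(ioc: str) -> list[str]:
    time.sleep(0.2)
    return [f"siem: 4 firewall allows to {ioc}"]


def query_edr(ioc: str) -> list[str]:
    time.sleep(0.2)
    return [f"edr: powershell.exe connected to {ioc}"]


def query_cloud(ioc: str) -> list[str]:
    time.sleep(0.2)
    return []


SOURCES = {"siem": query_siem, "edr": query_edr, "cloud": query_cloud}


def investigate(ioc: str) -> dict[str, list[str]]:
    """Send the same indicator to every source at once and gather all results."""
    with ThreadPoolExecutor(max_workers=len(SOURCES)) as pool:
        futures = {name: pool.submit(query, ioc) for name, query in SOURCES.items()}
        return {name: future.result() for name, future in futures.items()}


start = time.perf_counter()
results = investigate("203.0.113.7")
elapsed = time.perf_counter() - start
print(results)
print(f"{elapsed:.2f}s")  # close to 0.2s, versus about 0.6s if queried one by one
```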

Threat Detection and Response

Agentic systems fundamentally change the threat detection and response lifecycle. By perceiving threats across the entire security stack and acting on them through integrated tools, they create a more dynamic and resilient defense. An agent can perceive a phishing email, correlate it with a subsequent suspicious login from an unusual location, and act by triggering an MFA prompt or locking the account, all before an analyst has even seen the first alert.

However, there is an honest trade-off: current agentic AI excels at executing well-defined tasks based on known patterns. Identifying and responding to novel or highly sophisticated attack patterns that fall outside its training data still requires human judgment and creativity.

Challenges and Limitations of Agentic AI

Agentic AI introduces new operational risks alongside its benefits, with governance, security, and trust gaps that remain largely unsolved. The autonomy that makes these systems valuable also creates novel attack surfaces and challenges traditional security oversight models. Adopting agentic AI requires a clear-eyed assessment of its limitations and risks.

Security Risks of Agentic AI

The very features that make agentic AI powerful—autonomy, tool use, and persistence—also make it a target. Key risks include:

Tool-Use Exploitation: Attackers can manipulate an agent through deceptive prompts or poisoned data to abuse its integrated tools, potentially leading to unauthorized actions, data exfiltration, or remote code execution.

Persistent State Attacks: Because agents maintain memory, an attacker who successfully compromises an agent can establish a persistent foothold, subtly influencing its behavior over time or hijacking its credentials.

Identity and Authorization Challenges: Treating autonomous agents as a new class of identity is critical. Without robust authentication and just-in-time access controls, compromised agents can escalate privileges and move laterally across systems.

Multi-Agent Coordination Failures: In systems with multiple agents, an attacker can poison the communication channels between them, causing cascading failures or manipulating the collective decision-making process.
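
Tool-use exploitation and weak agent authorization are commonly mitigated by enforcing permissions outside the model. The Python sketch below is a hypothetical design, not any product's API: each agent identity gets deny-by-default access through short-lived, per-tool grants, in the spirit of just-in-time access. It does not prevent misuse of a tool that was legitimately granted, and it does not address memory poisoning or attacks on multi-agent communication channels.

```python
import time
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Grant:
    agent_id: str
    tool: str
    expires_at: float  # timestamp from the gate's clock


class ToolGate:
    """Deny-by-default broker between agent identities and the tools they call."""

    def __init__(self, clock: Callable[[], float] = time.monotonic) -> None:
        self._clock = clock
        self._grants: dict[tuple[str, str], Grant] = {}

    def grant(self, agent_id: str, tool: str, ttl_seconds: float) -> Grant:
        """Just-in-time access: one agent, one tool, short lifetime."""
        g = Grant(agent_id, tool, self._clock() + ttl_seconds)
        self._grants[(agent_id, tool)] = g
        return g

    def authorize(self, agent_id: str, tool: str) -> bool:
        g = self._grants.get((agent_id, tool))
        return g is not None and self._clock() < g.expires_at

    def call(self, agent_id: str, tool: str, fn: Callable[..., Any], *args: Any) -> Any:
        # The check runs outside the model, so a manipulated prompt cannot
        # talk its way past it.
        if not self.authorize(agent_id, tool):
            raise PermissionError(f"{agent_id} may not call {tool}")
        return fn(*args)


# A fake clock makes expiry easy to demonstrate.
now = [0.0]
gate = ToolGate(clock=lambda: now[0])
gate.grant("triage-agent", "siem_search", ttl_seconds=60)

print(gate.authorize("triage-agent", "siem_search"))      # True
print(gate.authorize("triage-agent", "disable_account"))  # False: never granted
now[0] = 61.0
print(gate.authorize("triage-agent", "siem_search"))      # False: grant expired
```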

Governance and Oversight

Governing autonomous systems requires new frameworks. Gartner predicts that more than 50% of enterprises will adopt AI security platforms by 2028 (Gartner, 2026), reflecting the urgency of this challenge. Security leaders must establish policies and technical guardrails to ensure agentic AI operates safely and aligns with organizational intent. Several frameworks provide a starting point:

Forrester AEGIS Framework — The AEGIS (Agentic AI Enterprise Guardrails for Information Security) framework provides guidance across six domains, including Identity and Access Management, Threat Management, and Data Security, specifically for governing agentic systems.

MITRE ATLAS — Building on the ATT&CK framework, ATLAS (Adversarial Threat Landscape for Artificial-Intelligence Systems) catalogs the tactics and techniques adversaries use against AI systems, helping organizations model threats and design stronger defenses.

NIST AI RMF — The NIST AI Risk Management Framework provides a structured process to map, measure, manage, and govern AI-related risks throughout the system lifecycle.

CISA AI Roadmap — CISA's roadmap outlines a whole-of-agency plan to promote the secure use of AI in critical infrastructure, assure AI systems against threats, and deter malicious use of AI.

The Adversarial Angle

Attackers are already weaponizing AI to automate reconnaissance, scale phishing campaigns, and accelerate attacks. As defensive agentic systems become more common, adversaries will inevitably develop counter-tactics designed to evade or exploit them. Security teams must prepare for AI-driven offensive campaigns that operate at a speed and scale beyond human capability.


Agentic AI and Human Collaboration

Agentic AI augments analyst capabilities rather than replacing headcount, and the most effective deployments keep humans in the loop for judgment calls, strategic guidance, and exception handling. The goal is to build a partnership where AI handles the machine-scale work and humans provide the critical thinking that machines lack.

This human-machine teaming model frees analysts from routine tasks and empowers them to operate at the top of their license. Instead of manually chasing down alerts, teams validate outcomes, manage exceptions, and guide strategy. This shifts the role of the security analyst from a reactive ticket-closer to a proactive threat hunter and system supervisor.

The SOC functions that benefit most from this collaborative model include:

Routine Monitoring and Triage: Agentic systems can handle the initial, high-volume analysis of alerts, allowing analysts to focus only on validated, high-priority incidents.

Decision Support: During a complex investigation, an agentic system can assemble and summarize all relevant data, presenting a complete dossier to the analyst so they can make a final determination with full context.

Cross-Team Coordination: Agentic AI can automate the handoffs between security, IT, and other teams—for instance, by creating a ticket in IT's system to re-image a machine after the SOC has contained a threat.
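
This collaboration model can be sketched as a risk-tiered action router in Python: low-impact actions proceed automatically, while anything else waits in a queue until an analyst approves it, and every executed action records who approved it. The `AUTO_APPROVED` set is an assumed policy for illustration, and appending to `executed` stands in for actually calling the downstream tool.

```python
from dataclasses import dataclass, field

# Assumed policy: low-impact actions run automatically; anything else waits for
# an analyst. Each organization would define its own list.
AUTO_APPROVED = {"enrich_ioc", "add_case_note", "create_it_ticket"}


@dataclass
class ActionRouter:
    executed: list[tuple[str, str, str]] = field(default_factory=list)
    pending: list[tuple[str, str]] = field(default_factory=list)

    def submit(self, action: str, target: str) -> str:
        if action in AUTO_APPROVED:
            self.executed.append((action, target, "auto"))  # stands in for execution
            return "executed"
        self.pending.append((action, target))
        return "awaiting_analyst"

    def approve(self, index: int, analyst: str) -> None:
        """Analyst signs off on a queued action; record who approved it."""
        action, target = self.pending.pop(index)
        self.executed.append((action, target, analyst))


router = ActionRouter()
print(router.submit("isolate_endpoint", "ws-042"))           # awaiting_analyst
router.approve(0, "analyst.kim")
print(router.submit("create_it_ticket", "re-image ws-042"))  # executed
print(router.executed)
# [('isolate_endpoint', 'ws-042', 'analyst.kim'), ('create_it_ticket', 're-image ws-042', 'auto')]
```

Recording the approver on every executed action gives the audit trail that governance frameworks typically expect for autonomous systems.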

Frequently Asked Questions

1. What is the difference between agentic AI and generative AI? Generative AI focuses on creating new content, like text or images, based on prompts. Agentic AI uses generative models as a reasoning engine to go a step further—it autonomously plans and takes actions in the real world to achieve a specific goal.

2. How does agentic AI work in a SOC? In a SOC, agentic AI automates and accelerates security operations. It triages alerts by enriching them with data, investigates potential threats by querying security tools, and orchestrates response actions like isolating an endpoint, all while keeping human analysts in the loop for critical decisions.

3. Will agentic AI replace security analysts? No. Agentic AI augments security analysts rather than replacing them. It handles repetitive, machine-scale tasks, which frees analysts to focus on higher-value work like threat hunting, strategic analysis, and managing complex incidents that require human judgment and creativity.

4. What is the difference between an AI agent and an agentic system? An AI agent is a specialized tool built for a single, narrow task, like enriching an IP address. An agentic system is a broader architecture that orchestrates multiple agents and tools to manage an entire workflow, such as investigating and responding to a threat from start to finish.

5. What frameworks exist for governing agentic AI? Several key frameworks address agentic AI governance: the Forrester AEGIS Framework for enterprise guardrails, MITRE ATLAS for modeling AI-specific threats, the NIST AI Risk Management Framework for managing risks, and CISA's AI Roadmap for securing critical infrastructure.

6. How should organizations evaluate agentic AI platforms? Evaluate platforms based on their ability to support a human-in-the-loop model, the breadth of their tool integrations with your existing security stack, the strength of their governance and oversight controls, and their transparency in how they make decisions and learn over time.

Summary & Next Steps

Agentic AI represents a fundamental evolution in how security operations are run. By shifting from manual task execution to autonomous, outcome-driven workflows, security teams augment their capabilities to meet threats at machine speed. This requires not only adopting new technology but also embracing a new operating model built on human-machine collaboration.

Learn more about purpose-built AI SOC agents

Explore the GreyMatter agentic AI SOC platform

Learn what it takes to build your own AI-driven SOC