In the past year, new AI integration protocols have promised seamless communication and coordinated automation across multiple AI sources without brittle custom code. With major platforms now actively adopting these standards, the promises are becoming production realities. If you're eager to reap the benefits of simplified AI integration, you're not alone. But many teams are deciding they're ready to connect before asking what happens when they do.

Before deciding how, or whether, AI systems should connect, every security team should understand why it is integrating AI and what real-world implications come with that choice.

This guide outlines the risks and validation questions you should consider when evaluating AI-to-AI integration.

Understanding MCP and A2A

As organizations deployed AI-powered capabilities across security operations, a practical problem surfaced: most AI systems were built in isolation. Connecting them to external tools, telemetry sources, and automation workflows required custom code for every integration.

For security teams, maintaining that code at scale quickly became untenable.

Two connection standards emerged to address that friction: Model Context Protocol (MCP) and Agent-to-Agent (A2A). Both have moved beyond early adoption. MCP is now supported by OpenAI, Google DeepMind, and Microsoft, while A2A has grown to over 150 partner organizations since its April 2025 launch.

What is MCP?

Model Context Protocol (MCP), introduced by Anthropic in late 2024, standardizes how AI models connect to external tools and environments. Through MCP servers, AI systems can autonomously retrieve context from data sources, query telemetry, and invoke actions across infrastructure through a common interface.
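To make the "common interface" concrete: MCP messages are JSON-RPC 2.0, and a tool invocation uses the `tools/call` method. The sketch below only builds such a request. The tool name `search_siem_logs` and its arguments are hypothetical, and connection setup, transport, and authentication are left out.

```python
import json
from itertools import count

# JSON-RPC requests carry an id so responses can be matched to them.
_request_ids = count(1)


def build_tool_call(tool_name: str, arguments: dict) -> dict:
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": next(_request_ids),
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, for illustration only.
request = build_tool_call("search_siem_logs", {"query": "failed_login", "hours": 24})
print(json.dumps(request, indent=2))
```

Because every tool is reached through the same message shape, adding a new tool changes the `name` and `arguments` values rather than the integration code.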

What is A2A?

Agent-to-Agent (A2A), introduced by Google in 2025, standardizes how autonomous AI agents communicate with one another. Through A2A, agents can discover capabilities, delegate tasks, and coordinate actions across systems.
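As a rough mental model of discovery and delegation, the sketch below keeps a small in-memory list of agent descriptions and routes a task to the first agent that advertises the needed skill. It is a simplified stand-in, not the A2A wire format: the `AgentCard` class, the agent names, and the skill names are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class AgentCard:
    """Simplified stand-in for the capability description an agent publishes."""
    name: str
    skills: Set[str] = field(default_factory=set)


def find_agent(cards: List[AgentCard], skill: str) -> Optional[AgentCard]:
    """Return the first agent advertising the requested skill, if any."""
    return next((card for card in cards if skill in card.skills), None)


def delegate(cards: List[AgentCard], skill: str, payload: dict) -> dict:
    """Assign a task to an agent that advertises the skill, or fail loudly."""
    agent = find_agent(cards, skill)
    if agent is None:
        raise LookupError(f"no agent advertises skill {skill!r}")
    return {"assigned_to": agent.name, "skill": skill, "payload": payload}


# Hypothetical agents and skills, for illustration only.
cards = [
    AgentCard("triage-agent", {"alert_triage"}),
    AgentCard("intel-agent", {"ioc_enrichment", "threat_lookup"}),
]
task = delegate(cards, "ioc_enrichment", {"indicator": "198.51.100.7"})
print(task["assigned_to"])  # prints "intel-agent"
```

Raising an error when no agent matches, rather than silently picking a default, keeps a missing capability visible instead of letting the task drift to the wrong system.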

Both protocols have the potential to solve real integration challenges. But deciding whether to implement one or both should start with a clear understanding of the downstream risks.

MCP and A2A Comparison

| Role | MCP: AI-to-Tools | A2A: Agent-to-Agent |
|---|---|---|
| Purpose | How an AI system connects to and invokes external tools, APIs, and data sources | How AI systems find, communicate, and coordinate with each other |
| Use Case | Single AI system accessing SIEM, EDR, threat intelligence, and cloud logs via a standardized interface | Multiple specialized AI agents delegating tasks and sharing context to achieve a shared objective |
| Validation Dependency | Connected tool(s) and AI system(s) must maintain optimal performance and continuous evaluation | Connected AI agent(s) must maintain optimal performance and continuous evaluation |
| Key Risk | AI retrieves incorrect data or invokes wrong tools → degraded performance and context quality | Agents with different validation baselines → compounding drift across SOC functions |
| Choose This If... | You need one or more AI systems to reliably access your tools | You need AI agents to work together, discover each other's capabilities, and coordinate actions |

4 Downstream Risks to Consider

Connecting AI systems introduces operational risks that scale with every integration you add:

Cascading errors across AI systems. When AI systems operate independently, a bad output stays contained. In connected environments, one system’s error can trigger automated actions that compound a single mistake into a multi-system incident.

Expanded attack surface with every connection. Each MCP server introduces a new tool interface. Each A2A channel creates a new communication pathway. As your integration ecosystem grows, so does the number of entry points an adversary can target.

Reduced auditability across system boundaries. You depend on traceability to understand how decisions were made. When an automated action leads to an unintended result, identifying which system contributed what, and where the failure started, becomes difficult or impossible.

Sensitive data moving between vendors. AI agents rely on shared context to make impactful decisions. When agents collaborate across environments, that context often moves between platforms and vendors. Most governance frameworks were not designed for machine-to-machine data exchange at this scale, leaving your questions around data ownership, retention, and model training usage unresolved.
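The cascading-error risk above can be made tangible with simple arithmetic. The sketch below assumes, purely for illustration, that each connected system is correct with the same probability and that errors are independent. Real systems rarely satisfy either assumption, and the 95% figure is hypothetical.

```python
def chain_accuracy(per_step_accuracy: float, steps: int) -> float:
    """Probability that every step in a chain is correct.

    Assumes each step has the same accuracy and that errors are independent.
    """
    if not 0.0 <= per_step_accuracy <= 1.0:
        raise ValueError("per_step_accuracy must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_accuracy ** steps


# Hypothetical 95% per-system accuracy across chains of increasing length.
for steps in (1, 3, 5, 10):
    print(steps, round(chain_accuracy(0.95, steps), 3))
```

Under these assumptions, ten chained systems that are each right 95% of the time produce a fully correct result only about 60% of the time, which is why each added connection deserves its own validation.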

6 Questions to Ask Before Connecting AI Systems

Connecting AI systems isn’t inherently risky. But connecting without a clearly defined objective can be. These questions are a good place to start if you're validating whether connecting AI is right for your environment:

Why do we want to connect these systems? Start with a specific operational gap each connection is intended to close.

What measurable results do we expect from connecting? Define success in measurable outcomes such as mean time to detect, mean time to contain, or reduction in Tier 1 and Tier 2 workload.

Does this connection reduce complexity or redistribute it? Adding connections introduces new dependencies, new failure modes, and ongoing maintenance overhead. Assess honestly whether a given integration will solve problems or create them.

Who maintains this architecture long-term? If your integrations depend on one engineer's knowledge of LangChain, MCP configurations, and a custom automation pipeline, you may be creating operational single points of failure.

What visibility exists into the integration itself? Once AI systems are connected, monitoring the output of individual tools isn’t enough. You need clear visibility into the connections themselves to see how data flows between agents, whether connected systems are producing accurate outputs, and how performance changes over time as the integration scales.

How will decisions be audited? You should be able to trace decisions across agents, attribute actions to specific systems, and meet compliance requirements across the full integration chain.
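One way to approach the audit question is to stamp every step of a decision with a shared trace ID and a pointer to the step that triggered it. The sketch below is a minimal in-memory illustration under that assumption. The `AuditEvent` and `AuditLog` classes and the agent names are hypothetical, and a production system would need durable, tamper-resistant storage.

```python
import uuid
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import List, Optional


@dataclass
class AuditEvent:
    trace_id: str             # shared by every step of one decision chain
    agent: str                # which system acted
    action: str               # what it did
    caused_by: Optional[str]  # event_id of the step that triggered this one
    event_id: str
    timestamp: str


class AuditLog:
    """Append-only, in-memory record of actions taken by connected agents."""

    def __init__(self) -> None:
        self.events: List[AuditEvent] = []

    def record(self, trace_id: str, agent: str, action: str,
               caused_by: Optional[str] = None) -> AuditEvent:
        event = AuditEvent(
            trace_id=trace_id,
            agent=agent,
            action=action,
            caused_by=caused_by,
            event_id=uuid.uuid4().hex,
            timestamp=datetime.now(timezone.utc).isoformat(),
        )
        self.events.append(event)
        return event

    def trace(self, trace_id: str) -> List[AuditEvent]:
        """Return every recorded step of one decision chain, in order."""
        return [e for e in self.events if e.trace_id == trace_id]


# Hypothetical two-agent chain, for illustration only.
log = AuditLog()
trace_id = uuid.uuid4().hex
first = log.record(trace_id, "triage-agent", "flagged alert as suspicious")
second = log.record(trace_id, "response-agent", "isolated host",
                    caused_by=first.event_id)
for event in log.trace(trace_id):
    print(event.agent, "->", event.action)
```

Following the `caused_by` links backward from an unintended action shows which system started the chain, which is the attribution the question asks for.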

How ReliaQuest Thinks About AI Integration

Connecting AI systems can be powerful, but only when you're clear on why you're doing it and what you're risking. The decision to connect should come after understanding your goals and the downstream risks. If you approach integration deliberately, you'll get more from it than if you connect first and ask questions later.

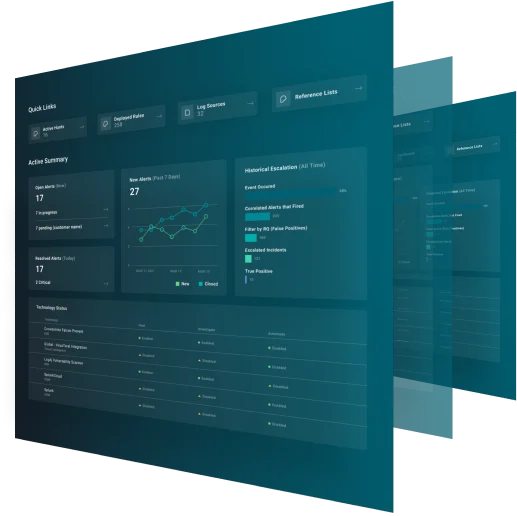

At ReliaQuest, we practice this ourselves. Within GreyMatter, we use both MCP and A2A—each for a specific, defined purpose. Our Agentic Teammates operate on an A2A framework so they can coordinate with each other—delegating tasks, sharing context, and working toward shared investigation outcomes. And GreyMatter uses MCP as the standardized way those agents access tools across the security stack. That's what deliberate integration looks like: each protocol serves a clear operational role, connected because it solves a defined problem.